Have you ever done your grocery shopping online and then received a customer survey in your inbox a few days later? Did you take the time to fill it in? Did you mean well and think “I’ll come back to that later”, and then never did? Did you start it and then give up by the time it asked you to rate the colour of your delivery driver’s underwear? Or did you notice the incentive of £5 off your next shop and persevere to the end?

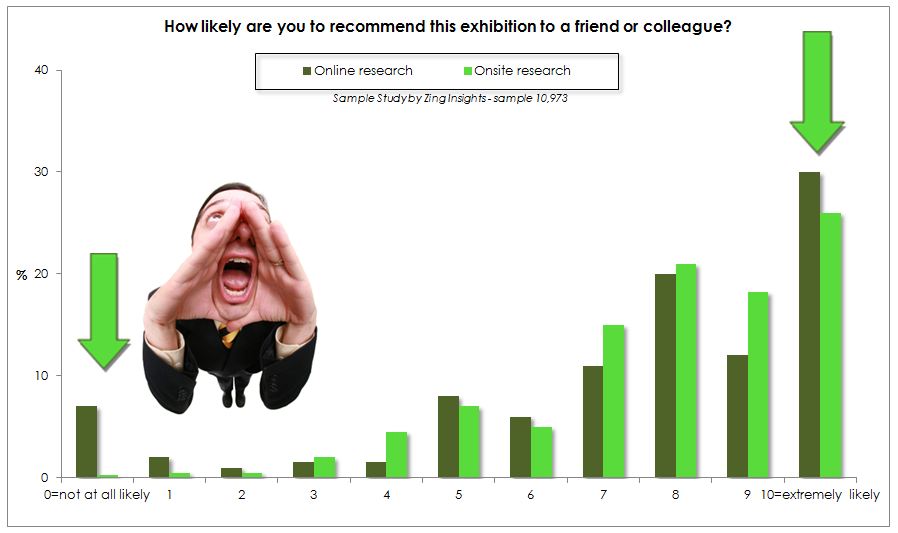

Zing Insights undertook a study of all the projects we researched within the events sector over the last six months, including a variety of large-scale trade shows and consumer exhibitions. In many instances we had data both from research conducted offline (i.e. onsite at the event, with visitors and exhibitors) and from post-show online surveys: 10,973 interviews in total. In the majority of cases, we found that the two samples were radically different. Our conclusion was that individuals who participate in online surveys are far more polarised than the wider audience, i.e. it is the biggest advocates and the loudest complainers who take the time to complete online surveys. Below is a chart illustrating a typical example. Respondents in the online sample (dark green) are more than ten times as likely to be complaining about the show (rating it a zero on the net promoter scale) and are significantly more likely to be a promoter (rating it 10 on the net promoter scale).

So what does this mean? Essentially, it means that the views and opinions you are getting from your online sample are not representative of the wider audience at your exhibition. How much this matters depends on what you are using your online surveys for. If the purpose of your online surveys is simply to track your event’s performance from one year to the next, then this isn’t a major problem, because as long as the method stays the same you are still comparing like with like. Obviously it does mean that online scores are likely to be less favourable overall: in some instances we have seen online net promoter scores up to 36 points below on-site scores. However, if your online survey data is influencing business decisions (if you are basing your marketing strategy and event development on online research results), this is more of an issue.
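For readers who want to see how a gap like this arises, here is a minimal Python sketch of the standard net promoter calculation (the percentage of respondents rating 9 or 10 minus the percentage rating 0 to 6). The function name and the two lists of ratings are made up purely for illustration; they are not data from our study.

```python
def net_promoter_score(ratings):
    """Return the NPS for a list of 0-10 ratings.

    Promoters rate 9 or 10, detractors rate 0 to 6; the score is
    the percentage of promoters minus the percentage of detractors.
    """
    if not ratings:
        raise ValueError("ratings must not be empty")
    promoters = sum(1 for r in ratings if r >= 9)
    detractors = sum(1 for r in ratings if r <= 6)
    return 100.0 * (promoters - detractors) / len(ratings)


# Hypothetical ratings, invented for this example only.
onsite_ratings = [8, 7, 9, 8, 6, 7, 10, 8, 9, 7]    # mostly middling scores
online_ratings = [0, 10, 10, 2, 10, 0, 9, 3, 10, 1]  # polarised scores

onsite_nps = net_promoter_score(onsite_ratings)
online_nps = net_promoter_score(online_ratings)
print(f"On-site NPS: {onsite_nps:.0f}, online NPS: {online_nps:.0f}")
```

In this invented example the polarised online sample contains more promoters than the on-site sample, yet it still produces the lower score, because the extra detractors more than cancel them out.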

In conclusion, don’t be fooled into thinking that your online sample is representative of your overall audience. It most likely reflects the views of your strongest promoters and strongest detractors, and misses Joe Average, who probably makes up the majority of your audience and is the person most influenced by slight tweaks to your marketing strategy and event development. And this is assuming that the database you are using for your survey mailing isn’t inherently biased already, e.g. towards advance ticket holders.

There are ways to make your online sample more representative. Weighting the data is one measure you can take, but this isn’t possible in most DIY survey software, as it’s a complex statistical process and one size doesn’t fit all. Incentivising is another, but you need to consider your incentive carefully: a good one matters more than ever at a time when we are deluged with requests for online surveys, and the incentive itself can easily influence and bias those who do decide to respond.
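To give a flavour of what weighting involves, here is a deliberately simplified Python sketch of one common approach, post-stratification on a single variable: each respondent is weighted by their group’s share of the real audience divided by that group’s share of the sample. The grouping by ticket type, the function names and all the figures are assumptions made up for illustration, and real projects usually need to balance several variables at once.

```python
from collections import Counter


def poststratification_weights(sample_groups, population_shares):
    """Return one weight per respondent: population share / sample share.

    sample_groups: the group label of each respondent, in order.
    population_shares: dict mapping each group to its known share of
    the whole audience (the shares should sum to 1).
    """
    counts = Counter(sample_groups)
    total = len(sample_groups)
    weights_by_group = {}
    for group, count in counts.items():
        if group not in population_shares:
            raise ValueError(f"no population share given for {group!r}")
        weights_by_group[group] = population_shares[group] / (count / total)
    return [weights_by_group[group] for group in sample_groups]


def weighted_mean(values, weights):
    """Return the weighted average of values."""
    return sum(v * w for v, w in zip(values, weights)) / sum(weights)


# Hypothetical example: advance ticket holders are 80% of the online
# sample but (we assume) only 50% of the actual audience.
groups = ["advance"] * 8 + ["door"] * 2
ratings = [9] * 8 + [5] * 2
weights = poststratification_weights(groups, {"advance": 0.5, "door": 0.5})

unweighted = sum(ratings) / len(ratings)
weighted = weighted_mean(ratings, weights)
print(f"Unweighted mean: {unweighted:.1f}, weighted mean: {weighted:.1f}")
```

Note that weighting of this kind can only correct for characteristics you know about in your real audience, such as ticket type. It cannot by itself bring back the moderate views of people who never respond.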

Zing Insights is an award-winning market research and insights consultancy run by a team of highly skilled research professionals with over 40 years’ experience of delivering world-class business insights and specialist experience of events and exhibitions research. Since launching in 2011, Zing have worked with an impressive list of clients including Spring Fair, Pure London, Farnborough International Airshow, BBC Good Food Shows, Autosport International, National Home Improvement Show, Taste of London, Food Ingredients Europe, RWM, and Marketing Week Live. For more information contact hello@zinginsights.com

Posted by: Jo Walther